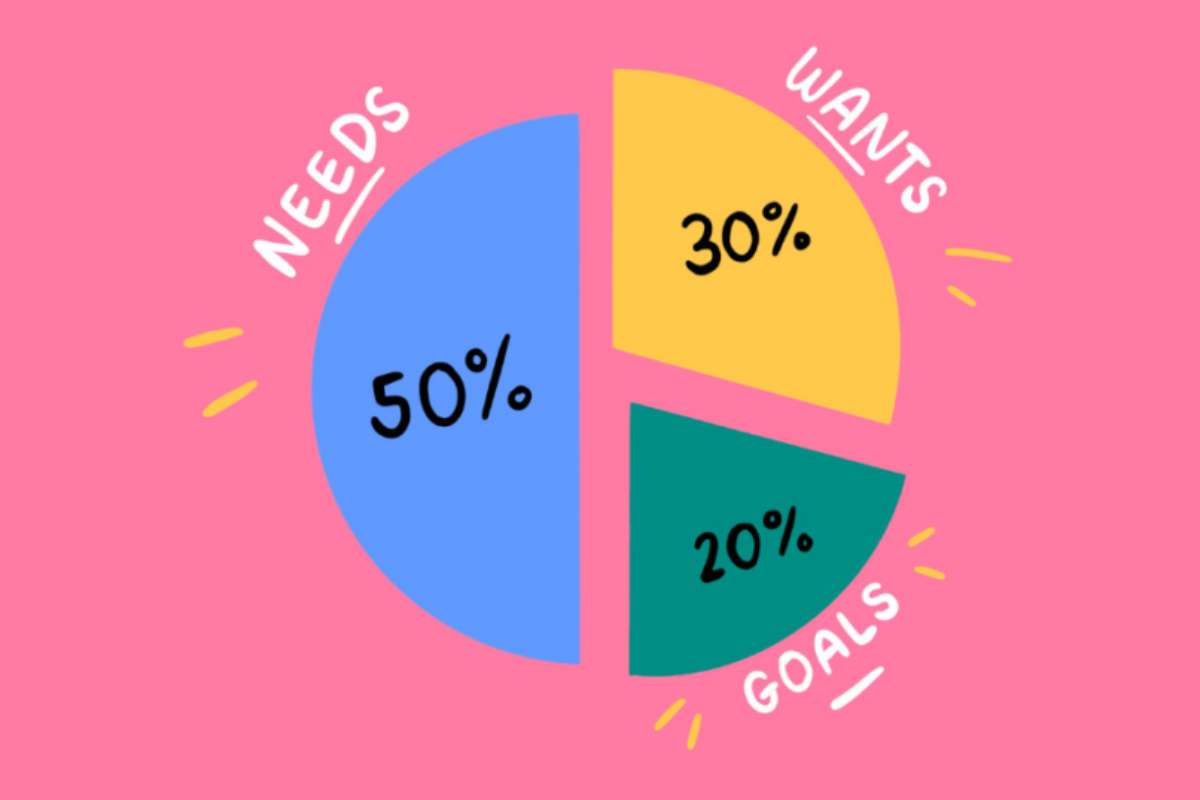

Your Financial Goals by Age: A Complete Roadmap to Build Wealth at Every Life Stage

Wondering if your money is on track for your age? This breakdown of financial goals by age reveals what most people miss and the steps that quietly build long-term wealth.